AI doesn't understand anything; it's just producing a linguistically coherent answer that may or may not be right. Stop looking to LLMs for answers if you care about whether those answers are correct.

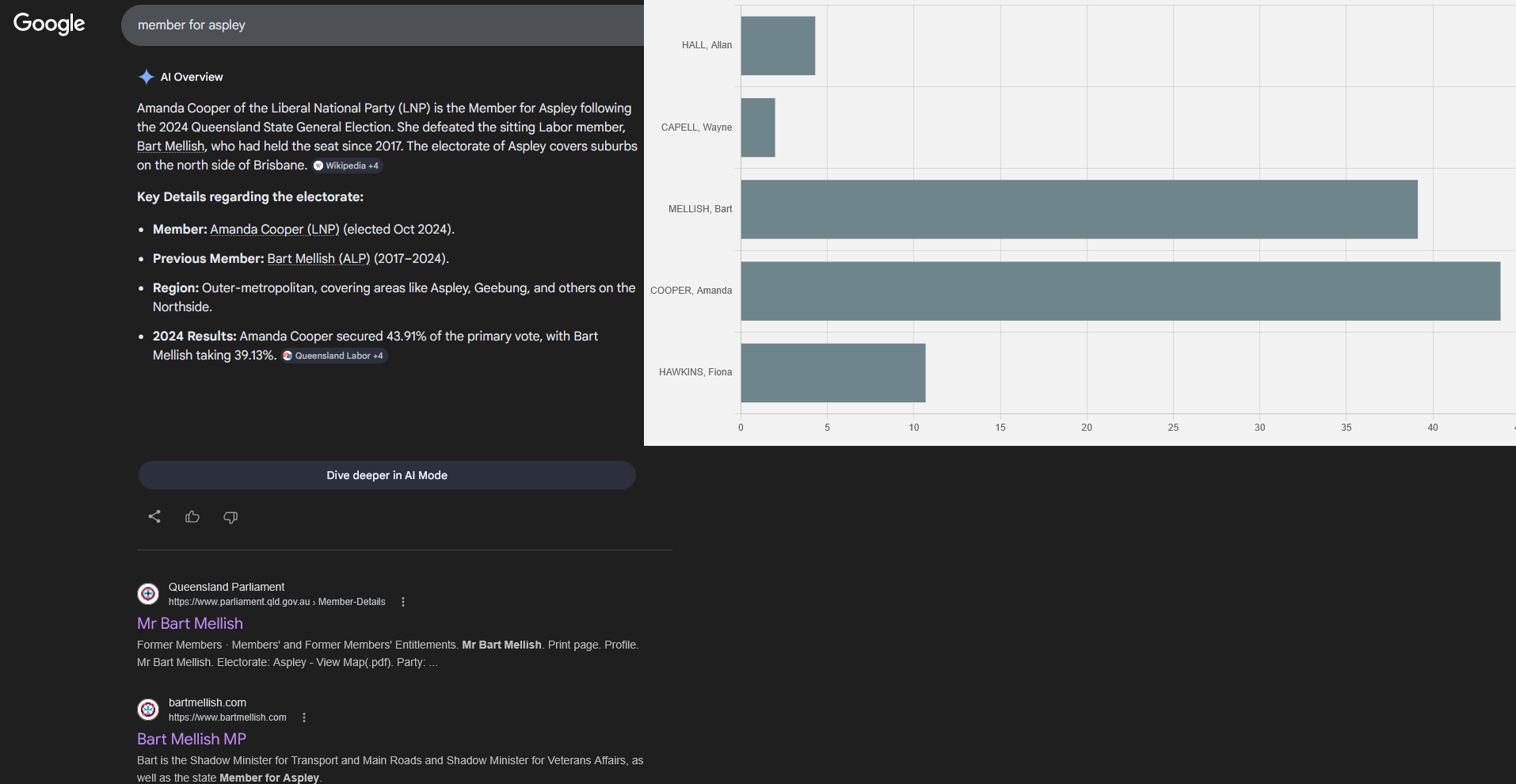

Stop looking to LLMs for answers

You don't look for it. Google shoves it right there where it's unavoidably the first thing you see.

I know, you should stop using Google

I wonder if you'd receive the correct answer if you went to Gemini directly? I tried the same thing with Lumo, which uses open source models and is usually less accurate than the major players, and it reasoned its way to the correct answer at the first time of asking. My understanding is that Google's AI Overview is not quite the same thing as Gemini, so perhaps it is more error prone too. I haven't used Google Search for a long time and didn't realise the AI Overview was still this bad.

Here's the reasoning output from Lumo:

The user is asking about the "member for Aspley" - this appears to be a reference to a political representative for the electoral division of Aspley. This could be referring to:

A federal electorate in Queensland, Australia (Aspley is a suburb in Brisbane)

A state electorate in Queensland

Given the user mentioned they live in Adelaide, South Australia, they might be asking about Australian politics. Aspley is indeed a federal electorate in Queensland, not South Australia.

This is a factual question about current political representation. Since my knowledge cutoff is April 2024, I should use web search to get the most current information about who currently holds this position, as elections and appointments can change.

Let me search for this information.

Oof. Lumo is wrong too, in a different way. There's no federal electorate of Aspley. The suburb of Aspley is in Lilley and Petrie.

I guess it can make sense if you interpret those lines as referring to the federal electorate which contains the suburb of Aspley, as opposed to a federal electorate called Aspley. The answer was fine so we'd have never known about that possible hallucination if I hadn't checked the reasoning output!

Bart Mellish is the current Member for Aspley in the Queensland Legislative Assembly. He represents the Australian Labor Party (ALP) and has held this position since 2017. His electorate office is located at Shop 8A, 46 Gayford Street, Aspley QLD 4034.

Expecting understanding of concepts from LLMs is a category error.